The Layoff Criterion Nobody Is Auditing

Somewhere in the middle of 2025, American tech firms started measuring their employees on whether they were using AI. The metric was never really about whether the work was getting done faster or better. It was about whether the employee had clocked visible hours on the tool, logged into the dashboard, shown up to the training. A Resume Builder survey of 1,342 managers published in June 2025 found two thirds of AI-using managers were using AI to help make layoff decisions, with more than one in five letting it make the final call without a human reviewing it. A separate Resume.org survey of 1,000 U.S. business leaders in September 2025 flagged employees lacking AI skills among the groups most likely to be cut in 2026. The tool had gone from optional to graded to, in some places, adjudicating, inside about a year.

The story that got told about this, over and over, was about productivity and transformation. The story that did not get told is what happens when you install a retention criterion on top of a workforce that was already being cut unevenly, and the criterion itself turns out to be unevenly distributed in the same direction. Pull the numbers together and women are about to get hit twice by the same process, through two different mechanisms, for reasons nobody has to sign their name to.

The first hit

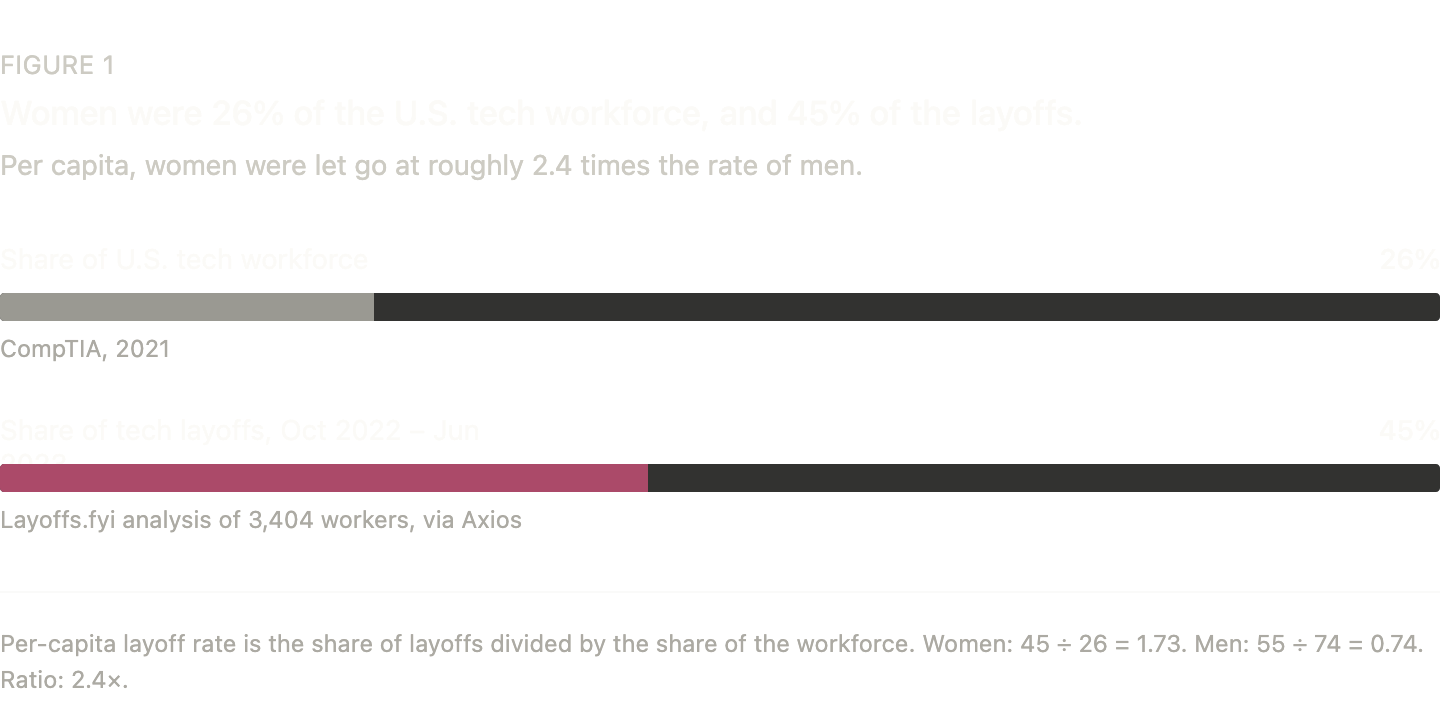

Tech was cutting women at a disproportionate rate well before an AI dashboard entered a performance review. A Layoffs.fyi analysis of 3,404 workers, reported by Axios, found women took 45% of the cuts between October 2022 and June 2023. Revelio Labs, running different data over September to December 2022, came in at 47%. Women are around 25 to 26% of the U.S. tech workforce per CompTIA, which means their share of the layoff pile was close to double their share of the desks, and on a per-capita basis they were being let go at roughly two and a half times the rate men were. Most of this analysis infers gender from names, so treat the numbers as directional, but every serious cut of the data says the gap is real and large.
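For anyone who wants to check the per-capita arithmetic, here is a minimal sketch in Python. The function name `relative_layoff_rate` and the 25.5% midpoint for the CompTIA workforce share are illustrative assumptions, not figures or methods taken from the cited analyses, and the calculation treats men's shares as the simple complement of women's.

```python
def relative_layoff_rate(share_of_layoffs: float, share_of_workforce: float) -> float:
    """Ratio of women's per-capita layoff rate to men's.

    Both arguments are women's shares, expressed as fractions between 0 and 1.
    Men's shares are taken as the complements, which assumes the data codes
    every worker as either a woman or a man (as name-based inference does).
    """
    if not (0 < share_of_workforce < 1 and 0 <= share_of_layoffs < 1):
        raise ValueError("shares must be fractions strictly below 1")
    # Layoffs per unit of headcount, for each group.
    women_rate = share_of_layoffs / share_of_workforce
    men_rate = (1 - share_of_layoffs) / (1 - share_of_workforce)
    return women_rate / men_rate


# Assumed midpoint of the 25 to 26% workforce share quoted above.
WORKFORCE_SHARE = 0.255

for label, layoff_share in [("Layoffs.fyi", 0.45), ("Revelio Labs", 0.47)]:
    ratio = relative_layoff_rate(layoff_share, WORKFORCE_SHARE)
    print(f"{label}: women laid off at {ratio:.2f}x the per-capita rate of men")
```

Under those assumptions the two layoff shares give ratios of about 2.4 and 2.6, which is where "roughly two and a half times" comes from. Moving the workforce share to 25% or 26% shifts the result slightly but not the conclusion.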

The gap had structural causes. Customer success, HR, recruiting, support, and marketing took the hardest hits, and those were the departments where women had been clustered, partly by choice and partly by a long industry habit of funneling them away from engineering and into functions that get called non-technical when the business is healthy and non-essential when the axe comes out. The composition of the cuts was a byproduct of where the axe was falling, and the story lived in a few Medium posts before the industry moved on.

That was the first hit. What nobody did when they moved on was ask whether the same group might be positioned for a second one, because the next round of layoffs was going to be sorted on an entirely different criterion.

The new criterion

The companies installing that criterion have mostly stopped being coy about it. Coinbase CEO Brian Armstrong went into the company's all-hands Slack in early 2025, per FinalRoundAI's reporting, and told every engineer to onboard to Cursor and Copilot by the end of the week, then fired the ones who skipped the Saturday catch-up session. Shopify's Tobi Lütke told staff in a March 2025 memo, posted to X before it could leak in bad faith, that "reflexive AI usage is now a baseline expectation," that AI usage questions would be added to every performance and peer review, and that teams asking for headcount would first have to prove the work could not be done with AI. Meta will make AI usage a formal part of every performance review from 2026, per WinBuzzer. Amazon Web Services has rolled out per-developer dashboards tracking exactly how much AI each engineer uses, which the company describes as "not yet" part of formal reviews, the kind of phrasing that tells you what it will be by this time next year. KPMG, Accenture, Nvidia, and McKinsey are on the same path. Duolingo tried it and pulled back in April 2026 after internal pushback, and every other firm has presumably seen similar pushback and decided it can absorb it.

The criterion is starting to shape who gets hired as well as who gets kept. A Nexford University survey of 1,000 respondents found 49% of employers were now more likely to retain workers with strong AI skills, and 29% of hiring managers were exclusively hiring people already fluent. Two years ago AI fluency was a skill you could pick up on a Sunday afternoon. Now your manager is scoring you on it.

The second gap

So who is clocking hours on the tool? Harvard Business School's 2025 meta-analysis of 18 studies covering 143,008 people across 25 countries found women had 22% lower odds than men of using generative AI, a gap the authors flagged as "nearly universal." The Federal Reserve Bank of New York's 2024 survey had roughly half of men having used generative AI in the previous year against about a third of women. The direction is consistent everywhere the question has been asked.

The easy read is that women are less interested in AI, which is the reading the research refuses to support. Lean In's 2025 work found women in the workplace are 23% less likely to receive manager encouragement to use AI, men are 27% more likely to be praised for using it on the job, and a separate Harvard Business Review study of engineers found women producing identical AI-assisted work were rated as less competent than their male colleagues. What the dashboard is picking up is a response, roughly the one you would expect in a room where using the tool gets your male colleague a gold star and gets you a raised eyebrow. The behavior the metric rewards has been getting quietly discouraged in exactly the workers the metric is now about to measure.

Hit twice

Stack the two things on top of each other. A group that is 25 to 26% of the tech workforce has been taking 45 to 47% of the layoffs, for reasons partly about which departments got culled and partly about where the industry had been placing women for twenty years. That same group is now walking into a second round in which the retention criterion is AI usage, a tool they are documented to be using less than men and getting a cooler reception for using when they do. The first mechanism was about where women sat, the second is about what women are being scored on at the desks they still have, and the overlap between who got the worst of the first and who is positioned for the worst of the second is not coincidence so much as the same group running the gauntlet twice, with the second run happening on a track the first run helped shape.

The second hit is also, crucially, invisible in a way the first one was not. The 2022 layoff gap showed up because entire departments full of women got cleared at once, and the pattern was legible from a Layoffs.fyi spreadsheet. A retention score ticking down quietly, month by month, inside each employee's performance file is not that kind of pattern. It produces the same outcome, spread over a longer period, with no single event loud enough to count.

Nobody sat down and drew up a filter designed to hit one group twice, which is the part that makes it worse rather than better. The filter was assembled out of a dozen reasonable-sounding decisions by a dozen reasonable-sounding people, each of them able to defend their piece in a meeting without raising their voice, and the thing it produces at the end is the thing nobody has to put their name on. Meta is run by a man, and so are Coinbase and Shopify. Roughly three quarters of tech managers are men, and if you are inside that three quarters, the metric looks like it is working, because it is, on you. The uneven distribution is only visible if you are standing somewhere else, and most of the people installing the metric are not.

What the paperwork will say

The system gets quietly tidy on its own behalf. Firings tied to an AI-usage score show up as performance-based exits, one at a time, on individual personnel files that no outside party ever sees. New York became the first state to require WARN Act filings to disclose whether technology had caused layoffs, and per Avi Goldfinger's reporting on Medium, out of 162 filings covering 28,300 workers, including filings from Amazon, Goldman Sachs, and Morgan Stanley, precisely zero checked the AI box. A Forrester study found that 55% of employers who had already made AI-attributed layoffs regretted the framing, which reads less like remorse and more like a note to self about public relations for next time. Even for mass layoffs there is an escape hatch: the federal WARN Act lets employers either give 60 days' notice or pay the 60 days in lieu, and companies can stage cuts into smaller tranches to duck the threshold entirely. The record of why people were cut rarely exists anywhere an outside party can read.

The woman let go in the coming round will be told, if she asks, that the decision was performance-based, which in the narrow technical sense is true. She will not be told that her score included an AI-usage component, that the component was gathered by a dashboard that could not tell a person using the tool less from a person being discouraged from using it more, or that the manager weighing her score was collecting praise for the same behavior she was being marked down for. By the time the pattern is legible enough to audit, the audit will be running against a record the audited parties wrote themselves, with the inconvenient boxes quietly unchecked.

The 2022 gap took one guy with a spreadsheet to surface, and even then it barely registered before the industry moved on. This gap has no spreadsheet waiting. It is being written into HR systems that do not open to the public, measured by managers whose own blind spots are the point, and distributed across enough individual decisions that no single one of them will ever look like the thing it is adding up to. A scandal needs somebody to blame, and this process has been arranged so that nobody has to sign it. Which is a tidy way of producing the same outcome with none of the liability.

Sources

- Layoffs.fyi and Axios, gender breakdown analysis of 3,404 laid-off tech workers, October 2022 to June 2023

- Revelio Labs, tech dismissals by gender, September to December 2022

- CompTIA, women as share of the U.S. tech workforce

- Harvard Business School, meta-analysis of 18 studies on generative AI gender usage, 143,008 individuals across 25 countries, 2025

- Federal Reserve Bank of New York, generative AI usage survey, 2024

- Lean In, research on manager encouragement, praise, and workplace AI adoption, 2025

- Harvard Business Review, study on gendered perceptions of AI-assisted engineering work

- Resume Builder, survey of 1,342 U.S. managers on AI in layoff decisions, 2025

- Nexford University, survey of 1,000 respondents on AI skills and retention

- BetaKit, Fortune, and CNBC, reporting on Shopify's March 2025 AI memo

- Forrester, study on employer regret over AI-attributed layoffs

- WinBuzzer, reporting on Meta's 2026 AI performance review policy and Duolingo's April 2026 reversal

- FinalRoundAI, reporting on Coinbase's Saturday AI onboarding meeting

- Avi Goldfinger on Medium, New York WARN Act technology disclosure filings analysis, 2025

- U.S. Department of Labor, WARN Act 60-day notice and pay-in-lieu provisions