AI Is Not Original, And It Can't Be

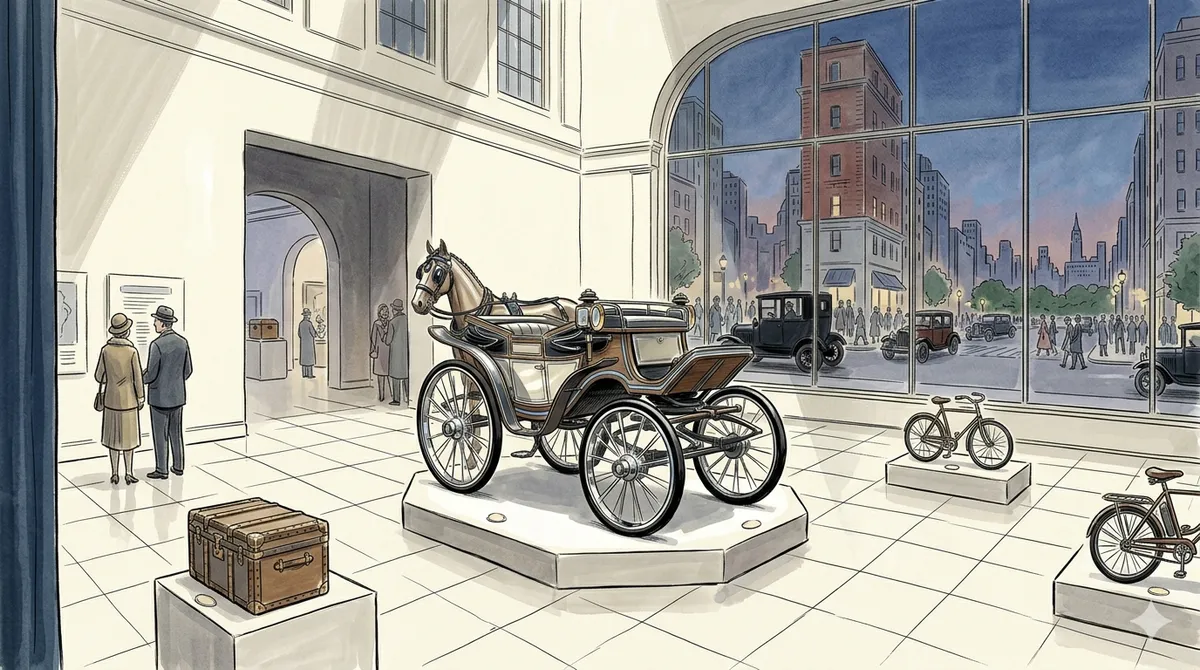

Generative AI predicts the most statistically likely output from its training data, which means everything it creates pulls toward the middle of what already exists. If the technology had existed in the age of the horse and cart, it would have given you a better cart, never a car.

There is a lot of hype around AI right now. A lot. If you can think of a task, there is somebody out there claiming that AI can do it, and they will happily sell you their product, their service, their course, their newsletter, their community, and probably their bathwater to prove it. Coding, design, strategy, writing, running your business, managing your life, raising your kids (okay maybe not that last one yet, but give it six months and someone will try).

The productivity claims behind this hype are thinner than the headlines suggest, but the limitations go deeper than ROI numbers.

This is great. This is awesome. We're inches away from our utopian dream. No more working for me, just setting up my AI agents and letting them bring in the dollars while I sit on a beach somewhere watching the revenue roll in like a man who's hired a personal chef and now reckons he can win MasterChef by forwarding the brief.

But there is one small thing that might stop this dream from happening in its current form, and it's not a small thing at all.

AI is being marketed, sold, and deployed as a replacement for human thinking. The pitch is that these tools can do what you do, but faster, cheaper, and without needing a lunch break. And for certain tasks that's genuinely true. But the pitch quietly skips over something fundamental: these tools cannot replicate human thought, and they never will be able to in their current form. Not because the technology isn't impressive (it absolutely is), but because of the way humans actually operate: the context we carry, the observations we make, the feelings and instincts and experiences that inform every decision we take. None of that is quantifiable in the way that a model needs it to be. We are not automations following a script. We are messy, contradictory, context-dependent creatures who make connections that no dataset can capture, and that difference is not a flaw to be engineered out, it's the entire reason we're useful.

Understanding why requires understanding what "AI" actually is right now, and what it isn't.

So What Is Everyone Actually Talking About

When most people say AI, what they're really talking about is Generative AI (GenAI), and the distinction matters more than most of the hype merchants would like you to realize.

GenAI is a type of artificial intelligence that generates new content (text, images, code, music, whatever) based on patterns it has learned from enormous amounts of existing data. When you ask ChatGPT a question, or get Midjourney to make you an image, or ask Claude to write you some code, you're using GenAI. It has consumed an almost incomprehensible volume of human-created content, learned the statistical relationships between words, pixels, and concepts, and when you give it a prompt it generates a response by predicting, one piece at a time, what should come next based on everything it has seen before.

That last bit is the important bit. Based on everything it has seen before.

GenAI does not think. It does not reason in the way you or I reason. It does not sit there stroking its chin and pondering whether there might be a better way to approach a problem. What it does, and it does this extraordinarily well, is recognize patterns in existing data and produce outputs that are statistically likely to be what you're looking for. It is the world's most sophisticated autocomplete. That's a reductive way of putting it and the engineers who build these models would probably want to throw something at me for saying it, but at a fundamental level that is what is happening. The model is not understanding your question, it is predicting what a good answer looks like based on the millions of answers it has already seen.
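If "sophisticated autocomplete" sounds abstract, here is a deliberately tiny sketch of the idea in Python. It is nowhere near how a real model is built (those use neural networks trained on vast amounts of text, not a word-count table), and the four-sentence corpus and the function names are made up for illustration. The principle it shows is the one described above: look at what came before, pick what most often came next, repeat.

```python
from collections import Counter, defaultdict

# Toy stand-in for "everything it has seen before" (invented for illustration).
CORPUS = [
    "a horse pulls the cart",
    "a horse pulls the cart",
    "a horse pulls the plough",
    "a horse pulls the cart",
]

def train_bigrams(sentences):
    """Count, for each word, which words followed it and how often."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def autocomplete(follows, prompt, max_words=10):
    """Extend the prompt one word at a time with the most frequent follower."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # nothing in the data ever followed this word
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

model = train_bigrams(CORPUS)
print(autocomplete(model, "a horse"))  # a horse pulls the cart
print(autocomplete(model, "a car"))    # a car
```

Give it "a horse" and it finishes the sentence with the cart, because the cart is what usually came next. Give it "a car" and it has nothing to add, because nothing in its data ever followed that word.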

This is different from the concept of Artificial General Intelligence, AGI, which is the idea of a machine that can actually think, reason, learn, and apply knowledge across domains the way a human can. AGI doesn't exist yet. It might not exist for decades. It might never exist. But it's the thing people picture in their heads when they hear "AI" because we've all watched the same movies, and the gap between that picture and what's actually sitting on your screen right now is roughly the size of the Grand Canyon wearing a fake mustache pretending to be a crack in the pavement.

This gap between perception and reality matters because companies are already using the AI narrative to restructure entire workforces around capabilities the technology does not have.

The Middle Of The Road Problem

So why does this matter for anyone who isn't an AI researcher or a computer science nerd?

Because the way GenAI works creates a very specific limitation that affects everything it produces, and once you see it you can't unsee it. GenAI, because it predicts the most statistically likely output from its training data, has a gravitational pull toward the middle. It converges on the most common, the most expected, the most statistically probable output. And that means everything it produces tends to land in a very crowded, very beige part of the spectrum.
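The pull toward the middle can be shown with a few lines of arithmetic. The sketch below is an illustration under assumptions, not a description of any particular product: the layout counts are invented, and the `sharpen` function mimics temperature-style rescaling, a common knob in text generation where lower values favor the most likely option even more heavily.

```python
# Hypothetical counts of landing-page layouts in a training set (invented numbers).
LAYOUT_COUNTS = {
    "centered headline": 700,
    "split image and text": 250,
    "full-screen video": 45,
    "hand-drawn comic": 5,
}

def most_likely(counts):
    """Always pick the single most common option."""
    return max(counts, key=counts.get)

def sharpen(counts, temperature):
    """Convert counts to probabilities, then rescale them by temperature.

    Each probability is raised to the power 1/temperature and renormalized:
    temperature below 1 exaggerates the favorite, above 1 flattens the spread.
    """
    total = sum(counts.values())
    weights = {k: (v / total) ** (1.0 / temperature) for k, v in counts.items()}
    norm = sum(weights.values())
    return {k: w / norm for k, w in weights.items()}

print(most_likely(LAYOUT_COUNTS))
for t in (1.0, 0.5):
    probs = sharpen(LAYOUT_COUNTS, t)
    print(t, {k: round(p, 4) for k, p in probs.items()})
```

At a temperature of 1.0 the centered headline is chosen 70% of the time; at 0.5 that rises to roughly 88%, and the hand-drawn comic all but disappears. The unusual option never reaches zero, but the more you tune for "reliable" output, the less often it shows up.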

Think about it this way. If GenAI had existed in the age of the horse and cart, and you asked it to design the future of transportation, it would have given you a really well-optimized horse and cart. Smoother wheels, maybe. Better axle design. A more aerodynamic carriage shape based on the thousands of carriages it had analyzed. It would have given you the best possible version of what already existed, because that is all it can do. What it would never, in a million years, have given you is a car. Because a car didn't come from optimizing the horse and cart, it came from someone stepping back and asking an entirely different question: what if we didn't need the horse at all?

That is the difference between optimization and originality, and GenAI can only do the first one.

You see this everywhere once you start looking. Ask any of the major AI coding tools to build you something and what you'll get back is functional, often impressively so, but it will be the most common way to solve that problem. The architecture, the patterns, the libraries, the approach, they'll all be whatever the plurality of developers on Stack Overflow and GitHub were doing when the model was trained. You'll get code that works in the same way that a flat-pack wardrobe works: structurally fine, does the job, looks exactly like everyone else's.

Design is the same story but more visible. Ask an AI to design a landing page and you will get something that looks like the average of every landing page it has ever seen. The hero section will be where you expect it. The call to action will be where you expect it. The color palette will be tasteful and forgettable. It will look professional in the way that a hotel lobby looks professional, technically fine but you couldn't pick it out of a lineup if your life depended on it. This is why AI-generated design has a "look" that people are starting to recognize, that slightly uncanny smoothness, like someone laminated a mood board and called it a brand.

The convergence toward the middle is not just an aesthetic problem. Inside companies, layoff survivors are already optimizing for the safe and expected, and the same gravitational pull toward average that makes AI outputs feel beige is now shaping the humans too.

And then there are ideas. This is where the limitation really bites because this is where people most want AI to be magic. Ask a GenAI tool to brainstorm product ideas, marketing strategies, solutions to a business problem, and what you'll get is a recombination of existing ideas. Sometimes a clever recombination, sometimes a surprising-looking one, but always built from pieces that already existed. The model cannot look at a problem and see something that isn't in its training data, because its training data is all it has. It's like asking a librarian who has read every book in the building to write something that isn't influenced by any of them. The request doesn't even make sense.

Why Humans Are Not Automations

Here's where the "AI replaces humans" pitch falls apart if you actually think about it for more than thirty seconds.

Your day, whatever you do for a living, is not a series of isolated tasks that can be broken down into inputs and outputs. It is a constant, messy, overlapping stream of context, observation, and feeling that you process mostly without even noticing you're doing it. You walk into a meeting and within seconds you've clocked that your colleague is having a bad day from the way they're holding their coffee, that the client is nervous because they keep checking their phone, that the energy in the room is off and maybe now isn't the time to push the ambitious proposal. None of that is in a dataset. None of that is quantifiable. And all of it informs the decision you make next.

That is not a nice-to-have. That is the thing that makes human judgment human judgment. The person who invented the car wasn't just optimizing transportation, they were responding to a lived experience of what it felt like to travel slowly, to be limited, to watch the world stay small because the fastest you could move was the speed of a horse. That frustration, that restlessness, that specific human irritation of knowing something could be better without being able to prove it yet, that's where originality comes from. And you cannot train a model on it because it doesn't exist in the training data until after someone has already acted on it.

The breakthroughs that actually changed the world almost never came from averaging what already existed. The iPhone didn't come from surveying every phone on the market and finding the statistical midpoint. Punk didn't emerge from an analysis of the most popular chord progressions of 1975. Airbnb didn't come from studying the hotel industry and optimizing for the most common guest experience. These things came from people who saw the existing landscape, felt something was wrong with it or missing from it, and built something from that feeling. GenAI cannot feel that something is missing. It can only work with what's there.

So Where Does That Leave Us

None of this means AI is useless, and anyone who tells you it is has probably not spent five minutes actually using it. GenAI is a phenomenally powerful tool for accelerating work that falls within known patterns. It's brilliant for first drafts, for boilerplate, for getting 80% of the way to something that a human can then push the last 20% on. It's brilliant for taking the horse and cart and making it the best damn horse and cart you've ever seen. And that's genuinely valuable, because most of the work that needs doing on any given day is exactly that kind of work.

But the moment you need someone to look at the horse and cart and think "what if we got rid of the horse," you need a human. You need someone carrying context that can't be scraped from the internet, making observations that can't be reduced to tokens, acting on feelings that no model can replicate because they come from the specific, unrepeatable experience of being a person in the world.

So the next time someone tells you AI is going to replace you, ask them which part. If it's the part where you follow established patterns and execute known solutions, yeah, they might have a point, and that's probably fine because that part was always a bit boring anyway. But if it's the part where you come up with the thing that nobody saw coming, the part where you connect something that has never been connected before, the part where you look at the way things are and feel in your gut that they could be different, then relax. Pour yourself a drink. The machines are impressive, but they're building you a really nice cart while you're busy inventing the engine, and the people selling you the dream that they can do both would quite like you to not think too hard about why they can't.