The Great AI Misdirection: "Regulation Can't Keep Up"

AI regulation in America is not a missed opportunity. It is delivering exactly what it was optimized for: money. This article explores why, and how differently the EU and the USA treat their citizens.

There is a story people tell about AI regulation, and it goes like this: the technology is moving too fast, governments can't keep up, regulators are overwhelmed, and by the time anyone writes a law the thing they're trying to regulate has already evolved into something else entirely. It is a story the technology industry tells the way a fox expresses sympathy about the henhouse door while licking feathers from its teeth, and it has become the default framing for almost every conversation about AI governance in Washington, in boardrooms, and across newspaper opinion sections where retired executives write thoughtful columns about the need for "thoughtful regulation" that never quite arrives.

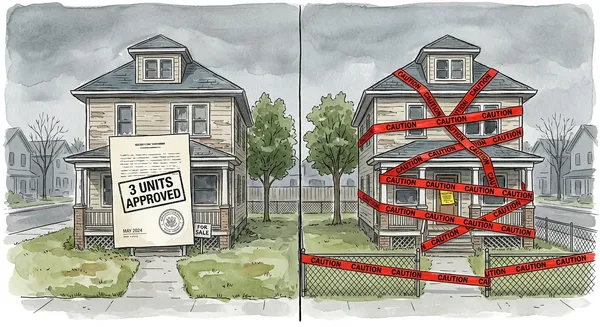

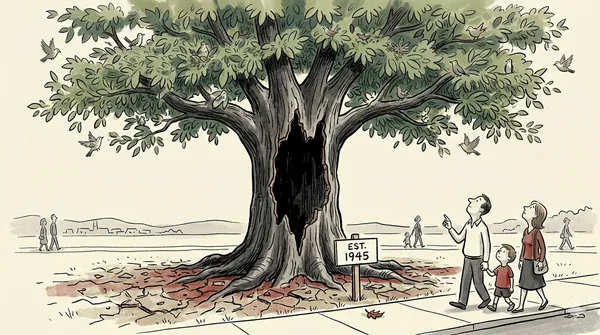

American regulation is working exactly as designed, and what it is designed to do is protect the interests of the companies building AI, not the citizens living with its consequences. Twenty years of social media already proved this, with evidence so comprehensive it could fill a congressional hearing room, which it did, multiple times, to no effect whatsoever. And now the same system, having learned precisely nothing it didn't intend to learn, is running the same experiment again with a technology whose capacity for both profit and damage makes social media look like a warm-up act.

In May 2023, the EU fined Meta €1.2 billion for violating the data rights of European citizens. Twenty months later, on January 20, 2025, the incoming US president revoked his predecessor's AI safety executive order before the desk chair was warm, and by December had directed the Department of Justice to create a task force whose explicit purpose was suing American states that tried to regulate AI on their own. Same companies, same documented harms, opposite responses.

What social media settled

Mark Zuckerberg sat before nearly a hundred lawmakers in April 2018, after Cambridge Analytica harvested the data of 87 million users, and testified for four hours and fifty-four minutes, the most elaborately staged piece of political theater since the invention of C-SPAN, a spectacle so devoid of consequence it could have sold tickets as prestige television about institutional failure. Senators asked questions that revealed they didn't understand the product, Zuckerberg gave answers that revealed he understood the senators perfectly, and everyone went home having accomplished nothing except footage that would age like milk.

The verdict underneath is no longer contested by anyone who has looked at the record: unregulated platforms, left to optimize for engagement without constraint, will reliably produce outcomes that are profitable for the platform and corrosive for everyone else, because engagement and harm turn out to be roommates who split the rent and throw the same parties. Voluntary self-regulation, the thing every tech CEO promises in the hearing room the way a teenager promises to clean their bedroom while already rehearsing the excuse, has never produced a clean bedroom. The harms (teenage mental health, election integrity, the ability of a society to agree on what day it is) are real and measurable and fall hardest on the people with the least power to opt out.

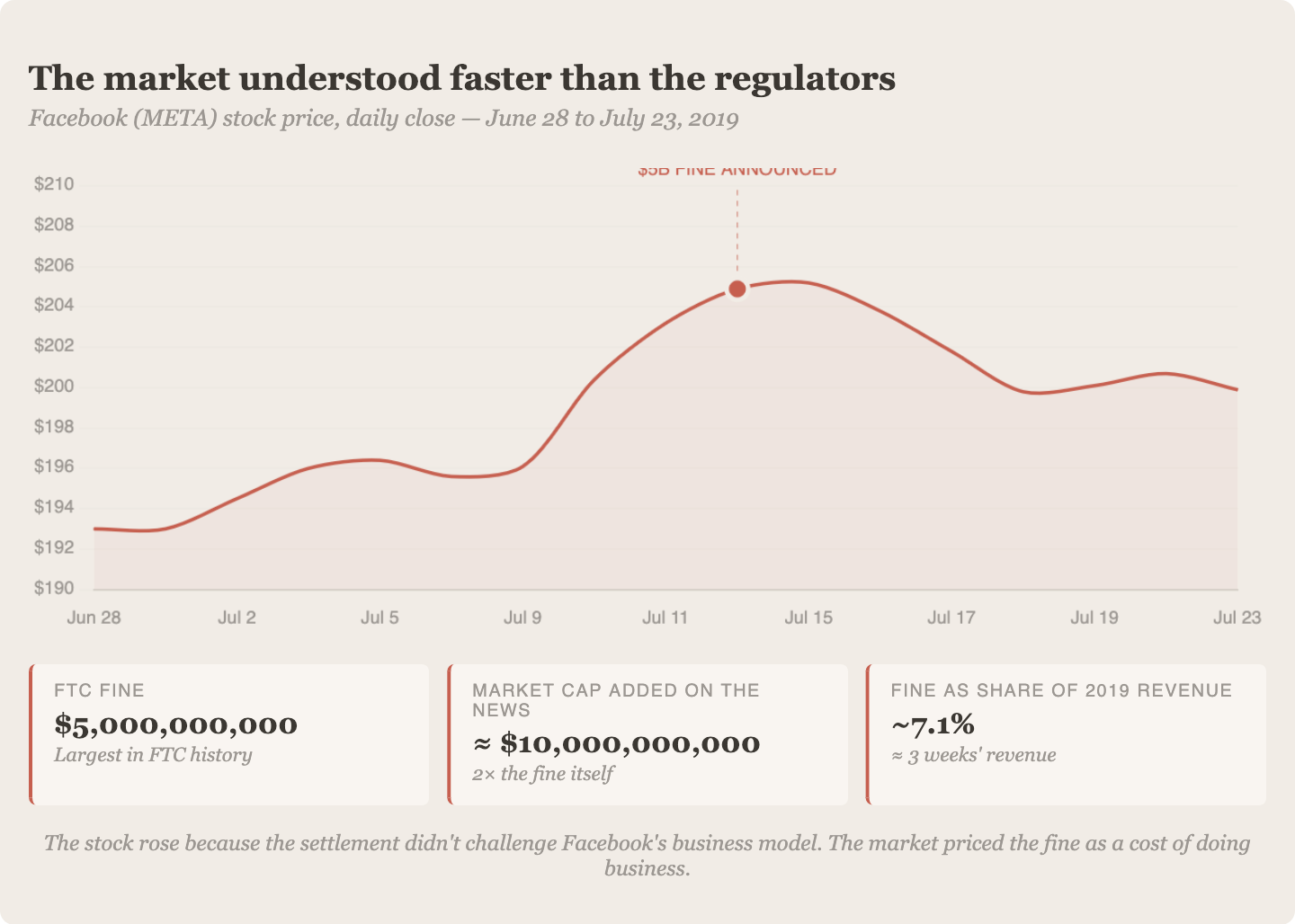

Frances Haugen's 2021 disclosures should have been the final word. Internal Facebook documents showed the company knew Instagram was damaging the mental health of teenage girls and kept writing the prescriptions anyway, like a doctor who has read the side effects and decided the margins are excellent. The FTC had already fined Facebook $5 billion in 2019, roughly one month's revenue, and the company's stock price rose on the news, which tells you everything you need to know about how American enforcement works: a penalty you can pay out of petty cash isn't a punishment, it's a licensing fee for continued misbehavior, and the market understood this faster than the regulators did.

OpenAI's governance has followed the same script, a board that exists on paper while the actual decisions get made through a web of portfolio companies and venture funds the directors never approved, because oversight you can route around isn't oversight.

Senator Klobuchar described bipartisan regulation bills collapsing "within 24 hours" of introduction under lobbying pressure, the kind of speed Congress usually reserves for naming post offices. Twenty-plus years of evidence, and the United States still has no comprehensive federal social media law.

Failure is the usual word for it: regulators too slow, too captured, and too short on technical vocabulary to keep up. But that reading assumes the system was trying to regulate and couldn't. A more honest reading, one that accounts for the lobbying infrastructure, the campaign contributions, and the revolving door spinning between regulatory agencies and the companies they supposedly oversee, is simpler and worse: the system performed exactly as its incentive structure dictates.

The utilitarian machine

American deregulation has always had a philosophical engine under the hood, even when nobody bothers to pop it open and name the parts. The argument runs like this: the market, left alone, allocates resources more efficiently than government, and the wealth that follows benefits everyone, which makes deregulation economically sensible and, conveniently, morally correct. It is a framework that started with Friedman, got sharper with Hayek, and was welded by Washington into its operating system, to the point where most policy debates don't question the premise; they just argue about where to set the slider.

Social media stress-tested this philosophy with the rigor of a controlled experiment whose results nobody ordered and nobody wanted to read.

That seven of the ten most valuable corporations on earth are American technology firms is not an accident of genius. It is what happens when a country lets companies corner entire markets without antitrust intervention, operate at zero profit for a decade while eliminating competitors, and harvest user data without the sovereignty rules that would have slowed them down anywhere else. Section 230 of the Communications Decency Act is the most brazen piece of the architecture. Written in 1996, when 40 million users shared roughly 300,000 websites and the most dangerous thing online was a GeoCities page that autoplayed MIDI files, it gives platform companies a liability shield no other industry in any other country has ever enjoyed. It was drafted for dial-up modems. It now covers companies that can move elections.

But the utilitarian math only balances if you count the costs honestly, and the costs were a restaurant that locks the doors after you've ordered and then charges you for the fire, distributed across billions of users who never consented and couldn't exit without withdrawing from public life. A genuine utilitarian, someone actually running the numbers, would look at that distribution and see a funnel engineered to concentrate benefit at the narrow end while spraying cost across everyone standing below. The greatest good didn't reach the greatest number. It reached the smallest, with extraordinary precision.

And the American system looked at this result and concluded: do it again, faster, with AI.

The proof it's a choice

The EU exists in this story the way a control group exists in an experiment: its presence proves that the American outcome is a decision, made repeatedly, by people who benefit from making it.

Brussels looked at the same social media wreckage and responded in a way that was slow, bureaucratic, sometimes infuriatingly procedural, and recognizably the behavior of an institution that tripped over a rake, studied the rake, commissioned a report on rakes, and then built a regulatory framework to prevent future rakes, which took six years but does actually cover most rakes. Nobody would call it elegant. But elegance was never the point.

GDPR taught the EU that enforcement needs to be centralized, because Ireland's Data Protection Commission moved so slowly on Meta complaints that the word "enforcement" started to feel like it belonged in air quotes. Waiting for the harm before writing the rules means installing smoke detectors in a pile of ash. So when they proposed the AI Act in April 2021, before ChatGPT existed (a fact worth letting settle for a moment), they were writing rules for a technology that hadn't yet detonated in public, because they had learned, at considerable cost, that writing them afterward doesn't work.

When ChatGPT broke the framework in late 2022, the EU didn't abandon the project, which is what the industry wanted with the focused longing of a speeding driver who has just spotted a speed camera. They rewrote the legislation instead, a process that was messy and contentious and that Mistral and the French government lobbied against with the intensity of students who just discovered the exam covers material from the first lecture, all of which is true and none of which changes the fact that the law exists, with fines of up to 7% of global turnover, which is the kind of number that makes a general counsel's eye twitch.

Article 8 of the EU Charter of Fundamental Rights enshrines data protection as a constitutional commitment, not a policy preference or a suggestion companies might consider if the quarterly numbers leave room for it. When the economy and individual rights collide, rights win, even when winning is expensive and slow and makes European tech companies feel underdressed at a party where their American competitors showed up in boardshorts sewn from deregulation and venture capital.

You can argue this is too slow. But what you cannot argue, what the existence of the EU framework makes impossible to maintain with a straight face, is that regulation "can't keep up" with AI. One of the world's largest economies built the roof. It leaks, the permits were a nightmare, and the builders complained the whole time, but it exists, and the people underneath it are drier than the ones still standing in the open insisting that roofs are impossible.

What America chose instead

Biden's Executive Order 14110, issued October 2023, was the closest thing the country had ever had to a federal AI governance framework, and it lasted fifteen months, which is to say it lasted one election cycle, because American tech policy gets written on executive orders the way love letters get written on napkins, sincerely meant at the time and structurally incapable of surviving breakfast.

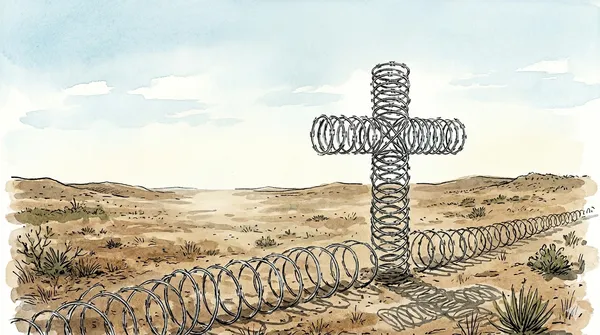

Active hostility toward the idea of regulation itself replaced it, and the distinction matters the way the distinction between forgetting to lock your door and removing the door from its hinges matters. The new administration created a DOJ task force to sue states that tried to fill the federal vacuum. Federal funding was withheld from states that dared to write their own rules. A ten-year moratorium on state AI regulation passed the House before the Senate killed it 99 to 1, briefly making the upper chamber look like a functioning institution.

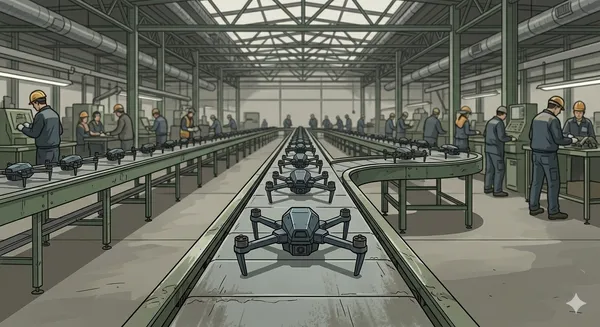

While social media was rising, tech lobbying was reactive, billions spent killing bills after introduction. The AI playbook is different. The industry stopped waiting for regulation to appear and started salting the earth where it might grow. Meta, OpenAI, and their peers have funneled hundreds of millions into political action committees and campaign contributions, and American tech companies are now fighting AI regulation in more than sixty countries, because the lesson of social media was plain enough: if you let one jurisdiction set the rules, you spend the next decade complying with them.

The AI productivity narrative gave them cover to do what they'd wanted all along, gutting headcount while investors applauded the efficiency story even as the actual productivity gains remained statistical noise, a mass layoff with better branding that hit women hardest because the metrics being used to justify retention favored the workers already holding power.

Sam Altman told lawmakers that safety regulation would be "disastrous," which is the kind of word that does a lot of work when spoken by someone whose company's valuation depends on the regulation not arriving. The industry's other favorite argument, that regulation means falling behind China, collapses on contact with the evidence: China went from draft to binding generative AI regulation in four months, requiring outputs to uphold "socialist core values," which tells you everything about the system's priorities, but the speed tells you something the American framing needs you not to notice. The "China race" argument launders a domestic policy choice as a national security imperative, wrapping what is functionally a gift to shareholders in a flag and calling it freedom.

A democracy stripping its own citizens of regulatory protection to compete with an authoritarian state starts, after a while, to resemble the thing it claims to oppose. China regulates fast by bypassing deliberation, opposition, and judicial review. The US deregulates fast by bypassing deliberation, opposition, and judicial review. The methods point in opposite directions, but if you're the citizen watching your government decide your welfare is negotiable, the view from where you're standing looks disturbingly the same.

The machine is working

Nobody is being fooled anymore, not with the Haugen documents and the Cambridge Analytica timeline and the stock price that went up on the day of the fine sitting right there in the public record for anyone who wants to look at it. The story that regulation "can't keep up" was always a misdirection, blaming the weather for a house you chose not to build a roof on.

You watched the social media experiment run for two decades and you saw who paid the costs and who counted the money, and now the same companies that ran that experiment are asking you to trust them with something more powerful, more pervasive, and harder to undo, wearing the same expression they wore last time, the one that says "trust us, this time will be different" with the practiced warmth of someone who has said it often enough to know it still works. The only question AI is going to settle, whether governments get around to asking it or not, is whether the democracy you live in thinks you are a citizen to be protected or a cost to be externalized, and whether you're prepared to keep quietly accepting whichever answer your government has already chosen for you.

The pattern keeps repeating because the survivors of each round of cuts learn the same lesson, that pushing back is how you end up in the next batch, so the internal opposition that might have stopped the worst decisions gets quietly filtered out until what remains is a yes-machine that ships whatever the exec wants regardless of whether the underlying technology can actually deliver what the marketing promised.

Sources

- Facebook–Cambridge Analytica data scandal — Cambridge Analytica harvesting 87 million users' data, Zuckerberg's April 2018 congressional testimony, FTC $5 billion fine (2019). Wikipedia

- Section 230, Communications Decency Act (1996) — full text. Legal Information Institute, Cornell Law School

- Senator Klobuchar on tech lobbying collapsing bipartisan bills "within 24 hours." CNBC

- US social media regulation overview — no comprehensive federal law. Ascend / Thentia

- EU Meta fine, €1.2 billion (May 2023) — GDPR enforcement and social media regulation. European Parliamentary Research Service

- GDPR history and timeline. European Data Protection Supervisor

- EU AI Act — proposed April 2021, revised for generative AI, penalties up to 7% of global turnover. Wikipedia; European Commission

- EU Charter of Fundamental Rights, Article 8 — data protection as constitutional right. EU Agency for Fundamental Rights

- Biden Executive Order 14110 (October 2023) — AI governance framework, revoked January 20, 2025. Wikipedia

- Trump "Ensuring a National Policy Framework for AI" executive order (December 11, 2025) — DOJ task force, federal funding withholding, state law preemption. White House; DLA Piper

- Ten-year moratorium on state AI regulation — passed House, rejected 99–1 by Senate. Ropes & Gray; DLA Piper

- Big Tech lobbying and Super PACs against AI regulation. Nemko Digital; SOMO

- US tech companies fighting AI regulation in 60+ countries. Rest of World

- Sam Altman testimony and state AI safety laws. MIT Technology Review

- China generative AI regulation — draft April 2023, finalized July 2023, effective August 15, 2023. White & Case; China Briefing; Library of Congress